AMD 3rd Gen EPYC Milan Review: A Peak vs Per Core Performance Balance

by Dr. Ian Cutress & Andrei Frumusanu on March 15, 2021 11:00 AM EST

Disclaimer June 25th: The benchmark figures in this review have been superseded by our second follow-up Milan review article, where we observe improved performance figures on a production platform compared to AMD’s reference system in this piece.

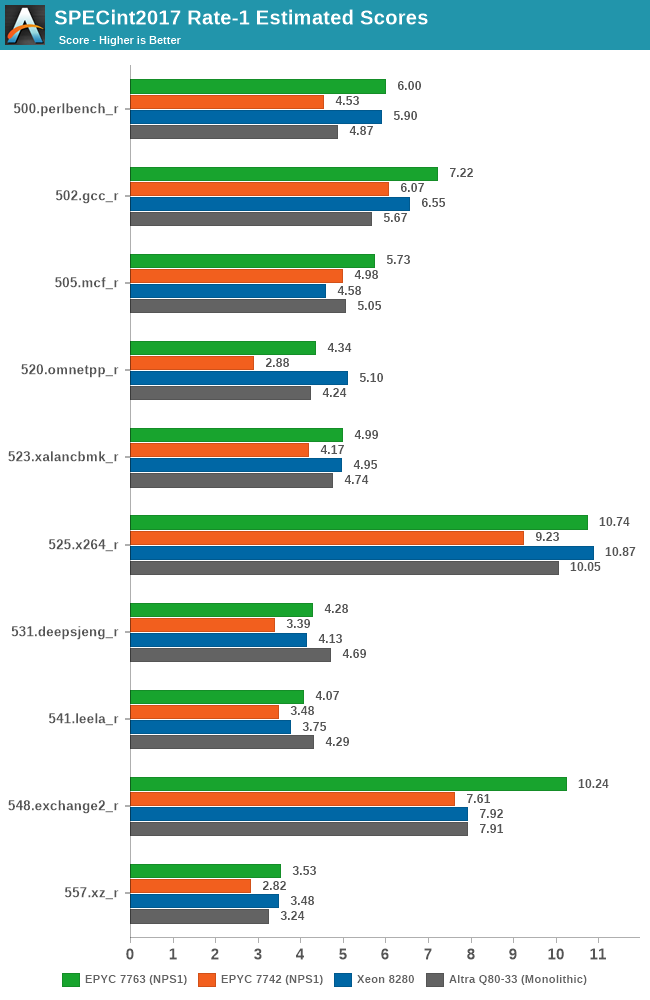

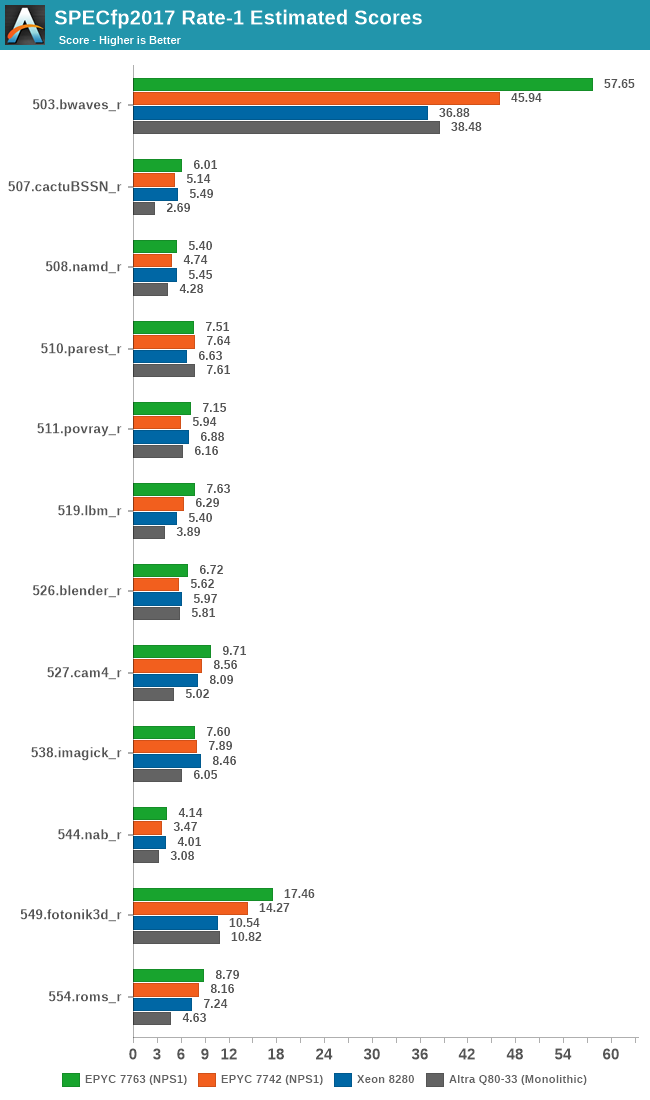

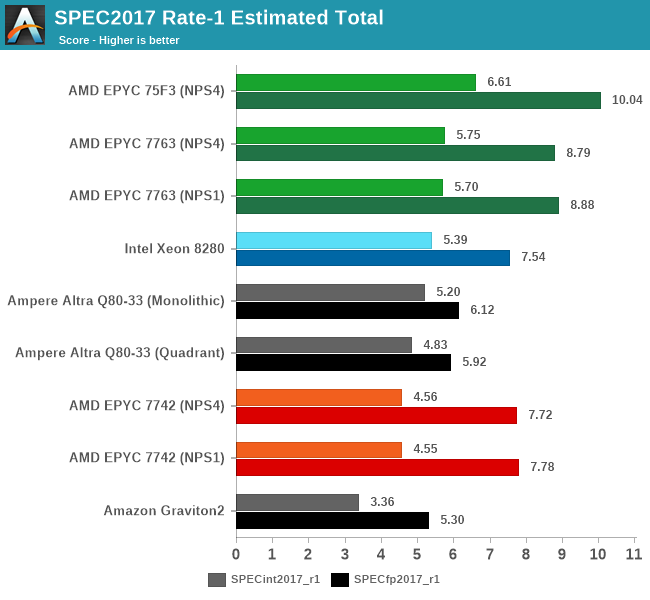

SPEC - Single-Threaded Performance

Single-thread performance of server CPUs usually isn’t the most important metric for most scale-out workloads, but there are use-cases such as EDA tools which are pretty much single-thread performance bound.

Power envelopes usually don’t matter here; what determines performance is simply the boost clocks of the CPUs, the IPC improvement, and the memory latency of the cores. We’re also testing in NPS1 mode: if you have single-thread-bound workloads, you should prefer to run the system as a single NUMA node.

Generationally, the new Zen3-based 7763 improves performance quite significantly over the 7742, even though I noted that both parts boosted almost equally to around 3400MHz in single-threaded scenarios. The uplifts here average out at a geomean of +25%, with individual increases ranging from +15% to +50% and a median of +22%.
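For readers who want to apply the same summary statistics to their own runs, here is a minimal sketch of how a geomean and a median uplift can be derived from per-subtest score ratios. The ratios below are made-up placeholders, not the measured results from this review, and the `geomean` helper is our own illustrative name.

```python
import math
import statistics


def geomean(ratios):
    """Geometric mean of positive ratios, computed via the mean of their logs."""
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical per-subtest ratios (new score / old score).
# These are illustrative placeholders, NOT data from this review.
ratios = [1.15, 1.18, 1.20, 1.22, 1.25, 1.30, 1.50]

geo_uplift = (geomean(ratios) - 1) * 100
median_uplift = (statistics.median(ratios) - 1) * 100

print(f"geomean uplift: +{geo_uplift:.1f}%")
print(f"median uplift:  +{median_uplift:.1f}%")
```

The geomean is the usual choice for aggregating ratios because one outlier subtest pulls it less than it would an arithmetic mean. The median shows where the typical subtest lands.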

The Milan part also now more clearly competes against the best of the competition, even though it’s not a single-thread-optimised part like the 75F3; we’ll see those scores a bit later.

In SPECfp, the Zen3-based Milan chip also does extremely well, with a geomean boost of +14.2% and a median of +18%.

The new 7763 takes a notable lead in single-threaded performance amongst the large core count SKUs in the market right now. More notably, the 75F3 further increases this lead through the higher 4GHz boost clock this frequency optimised part enables.
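As a rough, first-order sanity check, the clock figures quoted in this section can be turned into a naive clock-only scaling estimate. This sketch assumes performance scales linearly with frequency, which ignores cache and memory latency effects, so treat it as a ballpark and not a prediction.

```python
# Boost clocks as quoted in the text (approximate values).
boost_7763_mhz = 3400  # ~3400MHz observed single-thread boost of the 7763
boost_75f3_mhz = 4000  # 4GHz boost of the frequency-optimised 75F3

# Naive assumption: single-thread performance scales linearly with clock.
clock_ratio = boost_75f3_mhz / boost_7763_mhz

print(f"clock-only headroom: +{(clock_ratio - 1) * 100:.1f}%")
```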

120 Comments


aryonoco - Tuesday, March 16, 2021 - link

Thanks for the excellent article Andrei and Ian. Really appreciate your work.

Just wondering, is Johan no longer involved in server reviews? I'll really miss him.

Andrei Frumusanu - Saturday, March 20, 2021 - link

Johan is no longer part of AT.

SanX - Tuesday, March 16, 2021 - link

In summary, the difference in performance 9 vs 8 for (Milan vs Rome) means they are EQUAL. Not a single specific application which shows more than that. So much for the many months of hype and blahblah.

tyger11 - Tuesday, March 16, 2021 - link

Okay, now give us the new Zen 3 Threadripper Pro!

AusMatt - Wednesday, March 17, 2021 - link

Page 4 text: "a 255 x 255 matrix" should read: "a 256 x 256 matrix".

hmw - Friday, March 19, 2021 - link

What was the stepping for the Milan CPUs? B0? or B1?

mkbosmans - Saturday, March 20, 2021 - link

These inter-core synchronisation latency plots are slightly misleading, or at least not representative of "real software". By fixing the cache line that is used to the first core in the system and then ping-ponging it between two other cores you do not measure core-core latency, but rather core-to-cacheline-to-core, as expressed in the article. This is not how inter-thread communication usually works (in well-designed software).

Allocating the cache line on the memory local to one of the ping-pong threads would make the plot more informative (although a bit more boring).

mode_13h - Saturday, March 20, 2021 - link

Are you saying a single memory address is used for all combinations of core x core?

Ultimately, I wonder if it makes any difference which NUMA domain the address is in, for a benchmark like this. Once it's in L1 cache, that's what you're measuring, no matter the physical memory address.

Also, I take issue with the suggestion that core-to-core communication necessarily involves memory in one of the cores' NUMA domains. A lot of cases where real-world software is impacted by core-to-core latency involve global mutexes and atomic counters that won't necessarily be local to either core.

mkbosmans - Saturday, March 20, 2021 - link

Yes, otherwise the SE quadrant (socket 2 to socket 2 communication) would look identical to the NW quadrant, right?

It does matter which NUMA node the address is in; this is exactly what is addressed later in the article about Xeon having a better cache coherency protocol where this is less of an issue.

From the software side, I was more thinking of HPC applications where a situation of threads exchanging data that is owned by one of them is the norm, e.g. using OpenMP or MPI. That is indeed a different situation from contention on global mutexes.

mode_13h - Saturday, March 20, 2021 - link

How often is MPI used for communication *within* a shared-memory domain? I tend to think of it almost exclusively as a solution for inter-node communication.