Nehalem - Everything You Need to Know about Intel's New Architecture

by Anand Lal Shimpi on November 3, 2008 1:00 PM EST - Posted in CPUs

Further Power Managed Cache?

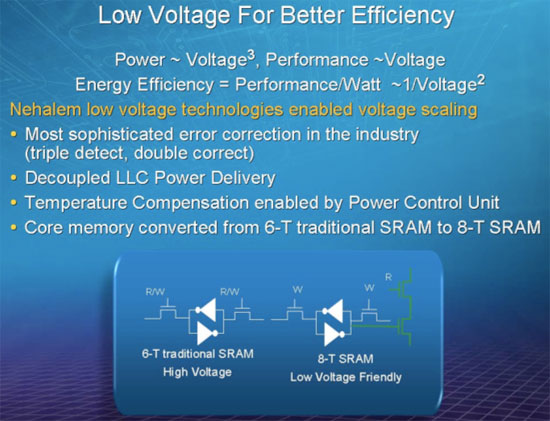

One new thing we learned about Nehalem at IDF was that Intel moved to an 8T (8-transistor) SRAM cell design for all of the core cache memory (the L1 and L2 caches, but not the L3 cache). Moving to an 8T design let Intel reduce Nehalem’s operating voltage, which in turn reduces power consumption. You may remember that Intel’s Atom team did something similar with its L1 cache:

“Instead of bumping up the voltage and sticking with a small signal array, Intel switched to a register file (1 read/1 write port). The cache now had a larger cell size (8 transistors per cell) which increased the area and footprint of the L1 instruction and data caches. The Atom floorplan had issues accommodating the larger sizes so the data cache had to be cut down from 32KB to 24KB in favor of the power benefits. We wondered why Atom had an asymmetrical L1 data and instruction cache (24KB and 32KB respectively, instead of 32KB/32KB) and it turns out that the cause was voltage.

A small signal array design based on a 6T cell has a certain minimum operating voltage, in other words it can retain state until a certain Vmin. In the L2 cache, Intel was able to use a 6T signal array design since it had inline ECC. There were other design decisions at work that prevented Intel from equipping the L1 cache with inline ECC, so the architects needed to go to a larger cell size in order to keep the operating voltage low.”

Intel states that Nehalem’s “Core memory converted from 6-T traditional SRAM to 8-T SRAM”. The only memory in the “core” of Nehalem is its L1 and L2 caches, which may help explain why the L2 cache per core is a very small 256KB. Moving Nehalem’s 8MB L3 cache from 6T to 8T cells would increase its cell transistor count by 33%, which would be costly. It’s also most likely not as necessary, since the L3 cache and the rest of the uncore run on their own voltage plane, as I’ll get to shortly.
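To put the 33% figure in perspective, here is a rough back-of-the-envelope sketch of the cell transistor counts involved. It assumes one SRAM cell per data bit and counts only the data array; tags, ECC bits, and peripheral logic are ignored, so these are illustrative estimates rather than Intel's numbers. The function name and constants are made up for this example.

```python
BITS_PER_BYTE = 8


def data_array_transistors(capacity_bytes: int, transistors_per_cell: int) -> int:
    """Transistors in the data array alone (assumption: no tags, ECC, or peripheral logic)."""
    return capacity_bytes * BITS_PER_BYTE * transistors_per_cell


L3_BYTES = 8 * 1024 * 1024  # Nehalem's 8MB shared L3
L2_BYTES = 256 * 1024       # 256KB L2 per core

l3_6t = data_array_transistors(L3_BYTES, 6)
l3_8t = data_array_transistors(L3_BYTES, 8)
l2_6t = data_array_transistors(L2_BYTES, 6)
l2_8t = data_array_transistors(L2_BYTES, 8)

# Going from 6 to 8 transistors per cell adds 2/6, or one third, regardless of capacity.
l3_increase = (l3_8t - l3_6t) / l3_6t

print(f"L3 at 6T: {l3_6t:,} transistors")
print(f"L3 at 8T: {l3_8t:,} transistors")
print(f"Extra for an 8T L3: {l3_8t - l3_6t:,} ({l3_increase:.1%})")
print(f"Extra for an 8T L2 (per core): {l2_8t - l2_6t:,}")
```

Under these assumptions, an 8T L3 would need roughly 134 million more cell transistors than a 6T one, while converting a single 256KB L2 costs only about 4 million. That gap is consistent with keeping the 8T cells confined to the small core caches.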

35 Comments


rflcptr - Wednesday, September 24, 2008 - link

The origin of Turbo Mode isn't Penryn, but rather Intel's DAT, first found in the Santa Rosa mobile chipset, with which both Merom and Penryn were compatible (and functioning with the tech activated).

hoohoo - Wednesday, September 10, 2008 - link

Outside of games the only area where raw performance matters to me is running high performance code - for me this is graphics processing and 3D rendering code, and it's just a hobby. I have some dealings with the HPC crowd though.

In the HPC biz memory bandwidth is a big issue. AMD has won hands down on that metric against Intel until perhaps the past six months. Nehalem server chip looks like it will beat Opteron on this metric.

Another important metric for HPC is linear algebra performance. GPUs are very good at linear algebra, but GPUs have strange programming requirements for the people who understand scientific programming - these people want to worry about the science or engineering and not about the specific cache architecture of an Nvidia or ATI GPU.

Just because I could, last winter I wrote some 2D graphics processing routines for an 8800GT+CUDA+AthlonX2-5200: gaussian blur, sharp filter, the like. I achieved on the order of 20x speed improvement on the 8800GT vs the AthlonX2 all on Linux - but it was a moderately brutal programming experience and I doubt your average researcher will do it. And, well, the PCIe bandwidth bottleneck would be a problem for large scale batch processing for such a simple calculation.

I don't know about ATI GPUs yet. I got a 3870 eight months ago and installed the AMD HPC GPU SDK ('nuff acronyms for ya?), but I can't face the pain of using it if after booting all the way into XP I could be fragging away in HL2 or Q4, or conquering the world in Civ2 Gold instead - and nobody really uses Windows servers for HPC clusters anyway. I think about writing Brook+ code on my 3870 sometimes but honestly I don't care that much. It'll be similar performance to the 8800, and it'll be *Windows* code.

If Intel can produce a chip that is somewhere between Larrabee and Nehalem, matching memory bandwidth with an easily programmed but highly parallel chip then Intel will have an opportunity to define a new sub-market: HPC processors.

It is indicative of the deficiencies of AMD marketing that it has a good GPGPU and the only way to program it is on the one OS that HPC shies away from: Windows. Clusters mostly run on Linux or UNIX.

But, AMD is working on a CPU+GPU product that could compete in that market.

Which of AMD or Intel will realize that there is money to be made with a chip that combines 2 or 4 CPU cores + 4 or 8 GPU style linear algebra cores, all with IEEE double precision ability?

Whither the Cell?

:-)

hooflung - Monday, November 3, 2008 - link

I think you are minimizing what an operating system is. While it is true that Linux, AIX and Solaris account for a large number of HPC and cluster environments, that doesn't mean Windows is poor in this regard.

There are solid options for Windows HPC where InfiniBand is very, very solid. Microsoft helped define the spec. However, it isn't done often enough in the public's eye like Linux. Remember, AIX and Windows were the most solid platforms for J2EE for a long, long time.

Also, Windows Clusters can be the better TCO solution for people. EVE-Online used Windows 2000 (now 2003 x86 and x64 ) and wrote their own load balancing software in Stackless ( and now have their own async IO stackless library ) which holds 33k at any given time of the day.

You really just have to decide what market you are trying to reach when considering OS choices. They all can provide similar performance.

Pixy - Friday, September 5, 2008 - link

All this sounds nice... but I have a question: when will laptops become fanless? The CPU is fast enough, work on turning down the heat!

Davinchy - Tuesday, August 26, 2008 - link

I thought I read somewhere that if the other processor cores were not working then they shut down and the one that was working got more juice and overclocked. So wouldn't that suggest that for the average consumer this chip will game much faster than a Penryn?

Dunno. Maybe I read it wrong.

jediknight - Sunday, August 24, 2008 - link

For desktop builders.

My aging S754 Athlon64 is dying.. so it's time to start thinking of building a new one. My laptop can only hold me out for so long, though..

Will I be able to buy a quad-core Nehalem processor in about the $250-300 range by the end of the year?

UnlimitedInternets36 - Saturday, August 23, 2008 - link

Core i7 wins big time for 3D rendering, modeling, and CAD programs.

Turbo is the best feature. I hope, at least in the Extreme Edition, we can set the Turbo headroom to like 5GHz!!! and have totally dynamic overclock scaling FTW!

Zbrush can utilize 256 processors, so I think a 2 socket Core i7 will help me out just fine. Sure, it doesn't automatically boost FPS in games, but that's partially a programming issue as well. Sooner or later the coding will catch up.

munyaka - Friday, August 22, 2008 - link

I have always stuck with AMD but this is the final nail in the coffin.

X1REME - Friday, August 22, 2008 - link

Is there anything you see that we don't? Please explain why.

niva - Friday, August 22, 2008 - link

Well, it is another step forward for Intel while AMD is still falling farther and farther behind the times. I want to caution that at this point there is no software actually optimized to run on i7 and any potential new instructions the chips will have. Once that happens and games are patched/recompiled or new games come out to take advantage of the massive CPU/memory bandwidth i7 offers it will be lights out.

Waiting on AMD to come out with the next best thing is becoming really old. I have a Phenom system, and I won't need a new one for at least another year or two, but even though I wish AMD would do better they're just being dominated by Intel right now.